Building an AWS Troubleshooting Guide Generator with Amazon Bedrock AgentCore

Why I built this

Troubleshooting AWS issues often means jumping between documentation, blog posts, internal runbooks, and a fair amount of tribal knowledge. Even experienced engineers end up repeating the same diagnostic steps across services and rediscovering the same failure modes.

What frustrated me wasn’t a lack of information — AWS documentation is generally excellent — but fragmentation. The relevant details exist, just not in the order you need them when something is broken and you’re trying to form a mental model quickly. In practice, troubleshooting becomes an exercise in stitching together context from multiple sources while keeping a growing checklist in your head.

I wanted to explore whether Amazon Bedrock AgentCore could be used to compress that process: take a problem description, reason about likely causes step by step, and produce a structured troubleshooting guide that mirrors how an experienced engineer would approach the issue.

This project is a prototype to test that idea end-to-end.

What this project does

This project implements an AWS Troubleshooting Guide Generator powered by AgentCore.

Given a natural language problem description (for example, “EC2 instance cannot reach the internet”), the agent:

- reasons about likely failure points based on the symptoms

- selectively queries relevant AWS context

- generates a structured, step-by-step troubleshooting guide with actionable checks

The key distinction is that the output is not a generic explanation or a static checklist. Instead of dumping everything that might be relevant, the agent attempts to prioritize diagnostic steps based on the problem as described.
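To make "prioritize diagnostic steps based on the problem" concrete, here is a minimal, hypothetical sketch. It is not the repository's implementation: in the real agent the model does this ranking through its reasoning, while the keyword table, `Check`, and `prioritized_checks` below are my own illustration of the idea of returning an ordered, de-duplicated set of checks instead of everything that might be relevant.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Check:
    name: str
    priority: int  # lower number = check earlier


# Hypothetical symptom-to-check table. In the actual agent the model does this
# reasoning; keyword matching here only illustrates ranking checks by symptom.
CHECKS_BY_SYMPTOM = {
    "internet": [
        Check("Route table has an active 0.0.0.0/0 route to an internet or NAT gateway", 1),
        Check("Instance has a public IP, or its subnet routes through a NAT gateway", 2),
        Check("Security group allows the outbound traffic", 3),
        Check("Network ACL allows outbound traffic and the return (ephemeral port) traffic", 4),
    ],
    "ssh": [
        Check("Security group allows inbound TCP 22 from your IP", 1),
        Check("Key pair matches the one configured on the instance", 2),
    ],
}


def prioritized_checks(problem: str) -> list[str]:
    """Return de-duplicated check names for every matching symptom, most urgent first."""
    text = problem.lower()
    ranked: dict[str, int] = {}
    for keyword, checks in CHECKS_BY_SYMPTOM.items():
        if keyword in text:
            for check in checks:
                # If a check appears under several symptoms, keep its most urgent priority.
                ranked[check.name] = min(check.priority, ranked.get(check.name, check.priority))
    # sorted() is stable, so equal priorities keep the table's order.
    return sorted(ranked, key=ranked.get)
```

For "EC2 instance cannot reach the internet", this returns the four internet checks with the route table first, and for a problem that matches no symptom it returns an empty list rather than a generic dump.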

The goal is not to replace AWS documentation or human judgment, but to reduce time-to-orientation — getting you from “something is broken” to “these are the first few things I should check” more quickly.

Architecture overview

At a high level, the system looks like this:

- Input: a natural language problem description

- AgentCore agent:

  - plans a troubleshooting strategy

  - reasons through possible failure modes

  - selects tools dynamically

- Tools:

  - AWS context lookups

  - constrained reasoning helpers

- Output: a step-by-step troubleshooting guide generated by the agent, uploaded to an S3 bucket, and returned to the user as a pre-signed URL
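The flow above can be sketched as a plain-Python skeleton. This is an illustration under stated assumptions, not the repository's code: in the real system AgentCore and the model drive planning and tool selection. Here `plan`, `tools`, `synthesize`, and `publish` are hypothetical callables, injected so the control flow (plan, call only known tools, synthesize, publish, keep a trace) can be read and tested without AWS or a model.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class RunResult:
    steps: list[str] = field(default_factory=list)  # ordered trace of what happened
    guide: str = ""
    url: str = ""


def run_pipeline(
    problem: str,
    plan: Callable[[str], list[str]],                  # hypothetical: picks tool names
    tools: dict[str, Callable[[str], str]],            # hypothetical: narrow context lookups
    synthesize: Callable[[str, dict[str, str]], str],  # hypothetical: writes the guide
    publish: Callable[[str], str],                     # hypothetical: stores guide, returns URL
) -> RunResult:
    result = RunResult()
    tool_names = plan(problem)
    result.steps.append(f"plan: {tool_names}")

    context: dict[str, str] = {}
    for name in tool_names:
        if name not in tools:
            # Only registered tools may run; anything else is recorded and skipped.
            result.steps.append(f"skip unknown tool: {name}")
            continue
        context[name] = tools[name](problem)
        result.steps.append(f"tool: {name}")

    result.guide = synthesize(problem, context)
    result.steps.append("synthesize")
    result.url = publish(result.guide)
    result.steps.append("publish")
    return result
```

Keeping the trace as a list of explicit steps is what makes a misbehaving run easy to inspect: you can see which tools were planned, which were skipped, and what context reached the synthesis step.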

Under the hood, this is not a single prompt sent to a model. The core of the system is an AgentCore agent with an explicit reasoning loop and a small set of narrowly scoped tools. The agent plans its approach, invokes tools to fetch AWS-specific context where needed, and then synthesizes the final guide based on those intermediate steps.
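As an example of what "narrowly scoped" can mean, here is a hypothetical helper in the spirit of the AWS context lookup tools. It does one deterministic thing with an EC2 `DescribeRouteTables` response (the kind of data you could fetch with boto3's `ec2.describe_route_tables(Filters=[{"Name": "association.subnet-id", "Values": [subnet_id]}])`) and leaves interpretation to the agent. The function name and scope are my own illustration, not the repository's tool definitions.

```python
from typing import Optional

# Route target fields checked in order. This covers common default-route targets
# but is not exhaustive (for example, VPC peering connections are left out).
TARGET_KEYS = ("GatewayId", "NatGatewayId", "TransitGatewayId", "NetworkInterfaceId")


def default_route_target(describe_route_tables_response: dict) -> Optional[str]:
    """Return the target ID of an active 0.0.0.0/0 route, or None if there is none.

    The input is assumed to have the shape of an EC2 DescribeRouteTables response.
    """
    for table in describe_route_tables_response.get("RouteTables", []):
        for route in table.get("Routes", []):
            if route.get("DestinationCidrBlock") != "0.0.0.0/0":
                continue
            if route.get("State") != "active":
                continue  # a "blackhole" route exists but cannot forward traffic
            for key in TARGET_KEYS:
                if key in route:
                    return route[key]
    return None
```

One caveat a real tool would need to handle: a subnet with no explicit route table association uses the VPC's main route table, so that subnet filter can return nothing even though a route table applies.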

Persisting the output as a generated artifact (rather than just returning text) makes the system easier to integrate into operational workflows and automation later on.
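A minimal sketch of the persistence step, assuming the guide is rendered as Markdown. `put_object` and `generate_presigned_url` are standard boto3 S3 client methods; the `publish_guide` name, the key layout, the content type, and the one-hour default expiry are my assumptions. The client is passed in so the function can be exercised without AWS.

```python
import uuid
from datetime import datetime, timezone


def publish_guide(s3_client, bucket: str, guide_markdown: str, expires_in: int = 3600) -> str:
    """Upload the guide to S3 and return a time-limited pre-signed GET URL."""
    # Hypothetical key layout: a date prefix plus a random suffix to avoid collisions.
    key = f"guides/{datetime.now(timezone.utc):%Y/%m/%d}/{uuid.uuid4()}.md"
    s3_client.put_object(
        Bucket=bucket,
        Key=key,
        Body=guide_markdown.encode("utf-8"),
        ContentType="text/markdown",
    )
    # Pre-signing is a local signing operation; the URL is only as durable as the
    # credentials that signed it and the ExpiresIn window.
    return s3_client.generate_presigned_url(
        "get_object",
        Params={"Bucket": bucket, "Key": key},
        ExpiresIn=expires_in,
    )
```

In practice you would call it as `publish_guide(boto3.client("s3"), "your-bucket", guide)`. Note that a URL signed with temporary credentials (such as an execution role's) stops working when those credentials expire, even if `ExpiresIn` is longer.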

Why AgentCore?

I considered simpler approaches, such as a single carefully engineered prompt or a retrieval-augmented generation (RAG) setup over AWS documentation. Those approaches can work well for explanations, but they tend to break down when you want repeatable diagnostic behavior.

What stood out to me about AgentCore is its focus on reasoning and tool orchestration, not just text generation. In this project, AgentCore enables:

- explicit reasoning steps that are easier to debug

- controlled tool invocation, with tools that themselves behave deterministically

- more reproducible behavior across runs

The tradeoff is additional complexity. You have to design tools carefully, and debugging an agent requires more upfront thought than tweaking a single prompt. For infrastructure and operational workflows, I found that tradeoff worthwhile.

Repository and code

The full implementation, including setup instructions and examples, is available here:

👉 GitHub repository

https://github.com/sidssn/aws-troubleshooting-guide-generator-example-with-agentcore

The repository includes:

- the agent definition

- tool configuration

- example troubleshooting scenarios

- instructions to run the project locally and to invoke the agent once it is deployed to AWS

If you’re looking for a concrete, minimal example of AgentCore applied to a real operational use case, this should be a good starting point.

How to try it yourself

At a high level:

- Clone the repository

- Configure your AWS credentials

- Follow the setup instructions in the README

- Run the agent with a sample AWS issue

You should be able to get a working example running in under 10 minutes.
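Once the agent is running locally, you can call it over HTTP. The AgentCore Runtime HTTP contract has the agent serve `POST /invocations` on port 8080, and the AgentCore Python SDK's local server follows it. The sketch below assumes the repository's agent uses that default and that its entrypoint reads a `prompt` field from the JSON payload and replies with JSON. Both are assumptions, so check the README and the entrypoint for the actual payload shape.

```python
import json
import urllib.request


def build_invocation_request(problem: str, base_url: str = "http://localhost:8080") -> urllib.request.Request:
    """Build a POST /invocations request; the {"prompt": ...} payload key is an assumption."""
    body = json.dumps({"prompt": problem}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/invocations",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def invoke_local(problem: str, timeout: float = 300.0) -> dict:
    """Send the request and parse the reply, assuming the agent responds with JSON."""
    with urllib.request.urlopen(build_invocation_request(problem), timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

With the agent running, `invoke_local("EC2 instance cannot reach the internet")` sends the sample issue. The generous timeout reflects that an agent run involves several model and tool calls.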

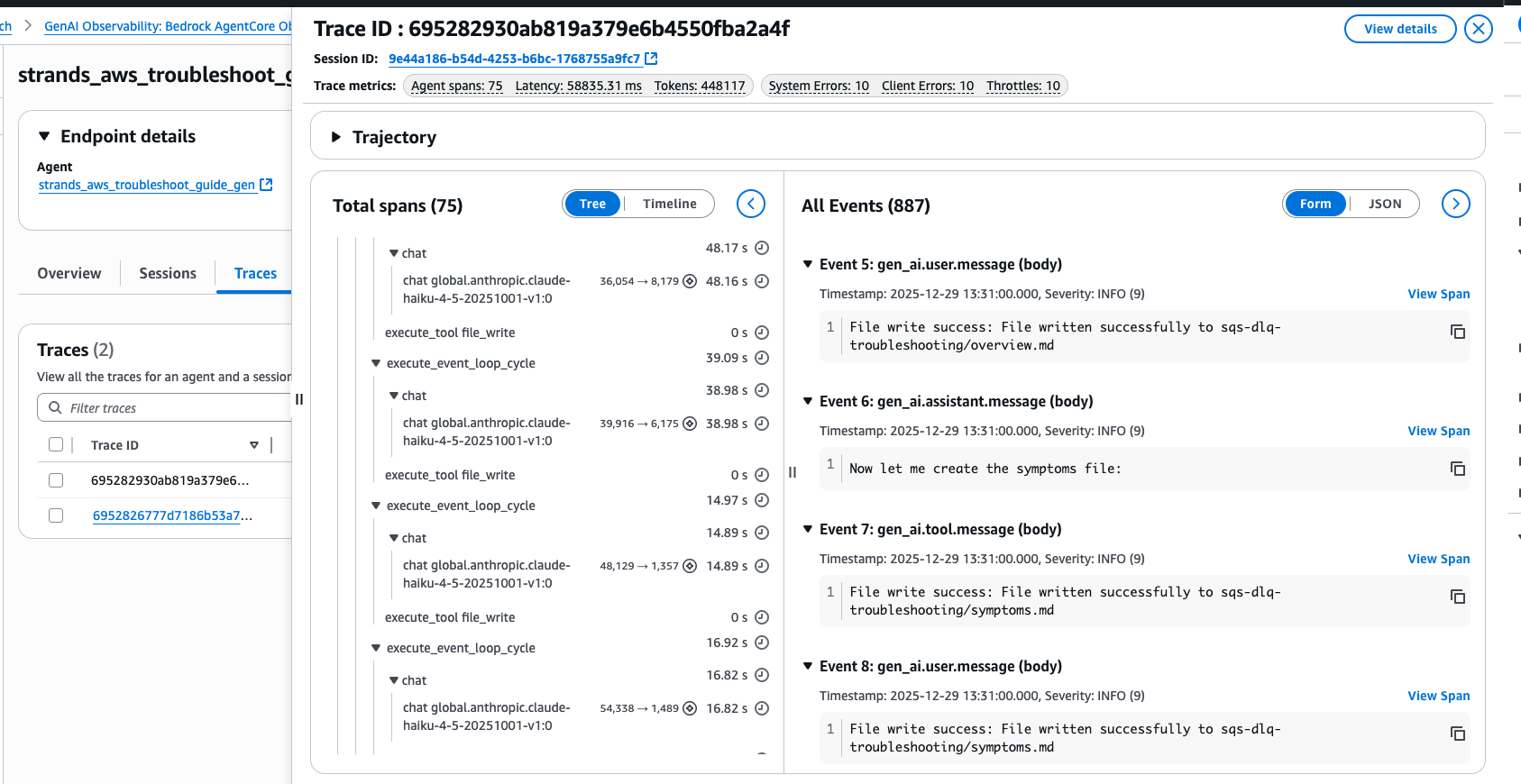

Observability and debugging

One of the biggest advantages of AgentCore over traditional LLM prompting is observability out of the box (OOTB).

All you need to do is enable CloudWatch Transaction Search for your account. You can then view your agent runtime traces in Amazon CloudWatch under GenAI Observability -> Bedrock AgentCore.

Limitations and failure modes

Like any LLM-driven system, this agent can be confidently wrong.

To reduce that risk, the design intentionally keeps tools narrow and deterministic, favors structured diagnostic steps over free-form advice, and treats the output as a guide, not an authoritative decision-maker.
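One way to enforce "structured diagnostic steps over free-form advice" is to validate the model's output against a fixed schema before it becomes a guide. The sketch below is hypothetical: the `DiagnosticStep` fields, `parse_steps`, and `render_markdown` are my own illustration, not the repository's output format. A step without a concrete way to verify it and an expected result is rejected rather than passed through.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DiagnosticStep:
    title: str          # what to check
    how_to_verify: str  # a concrete console path or CLI command
    expected: str       # what a healthy result looks like


REQUIRED_FIELDS = ("title", "how_to_verify", "expected")


def parse_steps(raw_steps: list[dict]) -> list[DiagnosticStep]:
    """Reject steps missing any required field instead of passing free-form text through."""
    steps = []
    for i, raw in enumerate(raw_steps, start=1):
        missing = [f for f in REQUIRED_FIELDS if not str(raw.get(f) or "").strip()]
        if missing:
            raise ValueError(f"step {i} is missing: {', '.join(missing)}")
        # Extra keys in the raw step are ignored; only the schema fields are kept.
        steps.append(DiagnosticStep(**{f: str(raw[f]).strip() for f in REQUIRED_FIELDS}))
    return steps


def render_markdown(steps: list[DiagnosticStep]) -> str:
    """Render validated steps as a numbered Markdown guide."""
    lines = ["# Troubleshooting guide", ""]
    for i, step in enumerate(steps, start=1):
        lines += [f"## {i}. {step.title}", f"- Verify: {step.how_to_verify}", f"- Expected: {step.expected}", ""]
    return "\n".join(lines)
```

A validation failure is a useful signal in itself: it can be fed back to the agent as a retry prompt instead of shipping a vague step to the user.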

This does not replace human judgment. Instead, it acts as a force multiplier: helping engineers reach the right questions faster, even if the final validation is still done manually.

I don’t claim this approach is universally better than traditional troubleshooting. Where it shows clear value is in early-stage diagnosis, especially for unfamiliar services or failure modes.

Observability and metrics for AgentCore Runtime are still evolving. At the time of writing, they are only available in the us-east-1 Region. As AgentCore matures, I expect the observability story to improve further.

Lessons learned

A few takeaways from building this:

- AgentCore works best when tools are small, focused, and well-defined

- Explicit reasoning steps make debugging agent behavior much easier

- Agentic approaches feel especially promising for cloud operations and DevOps workflows, where decision paths matter as much as final answers

There’s still plenty of room to experiment, but the foundation feels solid.

What’s next

Next, I plan to:

- add more ways to validate the content generated by the agent

- refine the agent’s reasoning strategy based on real-world feedback

- explore how the agent's behavior changes once AgentCore Memory is set up

If you’re experimenting with AgentCore or agentic workflows, I’d love to hear what you’re building.

Final thoughts

AgentCore opens up an interesting design space for operational tooling. This project is a small step, but it demonstrates how agentic systems can help reduce cognitive load in complex cloud environments.

If this sounds useful, feel free to try it out or contribute.

Thanks for reading.